To break it down a bit more: across everything you can do with a drone, the use cases fall into two distinct categories. In piloted UAV flight, a pilot controls the drone with their hands on the joysticks; in autonomous flight, sensors and algorithms do the flying.

What the Chalmers researchers are specifically curious about is the environment and the people who interact with the drone in some way, a field referred to as human-drone interaction. Scholars in this field work in areas such as sports, using drones as training devices or to enhance the spectator’s experience. Others use drones to bring physical touch into virtual reality or to project digital information. These concepts all boil down to human-robot interaction within the context of human-computer interaction and computer science.

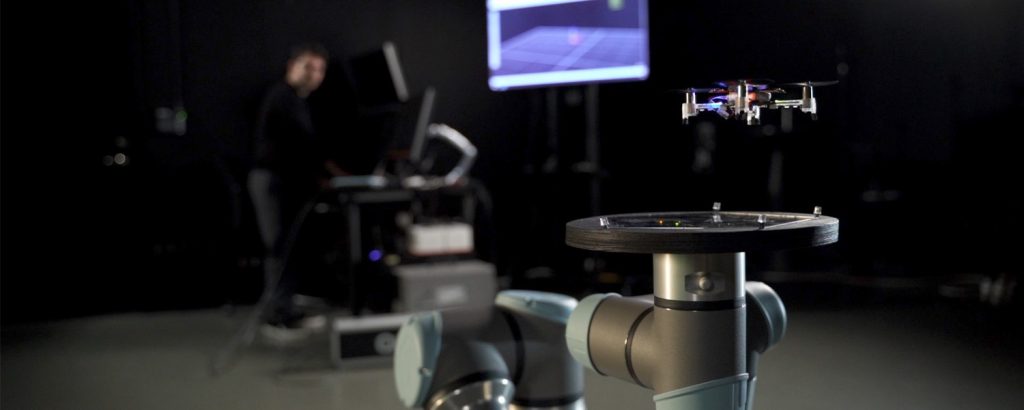

Their approach draws on philosophies and practices that are known to be useful in cultivating positive, healthy, benevolent qualities in ourselves. The Qualisys motion capture system is used to capture the motion of both humans and drones, either together or separately, as the group designs experiences with digital and robotic materials that embody the essence of these practices.

One example is a project called Drone Chi, a system for movement exercises performed together with a drone. Inspired by Tai Chi, it is meant to be a meditative experience for cultivating focus and calm. In this project, the researchers used marker-based tracking to follow and control the drone in 3D space, and to follow the user’s hands via custom-designed 3D-printed hand pads.
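To make the idea of a drone following a tracked hand more concrete, here is a minimal sketch. It is not the Drone Chi control algorithm, which the source does not describe. It assumes that a tracking system supplies the drone and hand positions as (x, y, z) tuples in metres, and the function name `next_setpoint`, the hover offset, and the step limit are all hypothetical choices made for illustration.

```python
import math

def next_setpoint(drone_pos, hand_pos, offset=(0.0, 0.0, 0.3), max_step=0.05):
    """Return the next position setpoint for a drone following a tracked hand.

    Positions are (x, y, z) tuples in metres (an assumption for this sketch).
    The drone aims for a point `offset` away from the hand, and each update
    moves the setpoint by at most `max_step` so the motion stays slow and calm.
    """
    # Point the drone should eventually hover at, relative to the hand.
    target = tuple(h + o for h, o in zip(hand_pos, offset))
    # Vector from the drone's current position to that target.
    delta = tuple(t - d for t, d in zip(target, drone_pos))
    dist = math.sqrt(sum(c * c for c in delta))
    if dist <= max_step:
        return target
    # Too far to reach in one update: take a step of length max_step toward it.
    scale = max_step / dist
    return tuple(d + c * scale for d, c in zip(drone_pos, delta))
```

Calling this once per tracking frame would produce a setpoint that drifts smoothly toward the hand rather than jumping to it, which is one plausible way to keep the movement gentle.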

The control algorithm, industrial design, and narrative aspects of the experience were developed by the project’s leader and principal designer Joseph La Delfa, PhD student at RMIT University in Melbourne, Australia. Throughout the project, La Delfa and the Chalmers team have been collaborating to produce research papers, exhibitions, and rich evolutions of the design.

The second example is Wisp, a prototype the Chalmers computer science researchers are developing for a breathing exercise: wearable sensors detect the user’s breathing, and the drone moves with the breath in real time.

Led by PhD student Mafalda Gamboa, the project investigates how a drone-based interactive experience might motivate people of all ages to take up meditation and breathwork, and how a drone’s movement can be used in such exercises to guide and enhance the experience.
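As a rough illustration of a drone moving with the breath, the sketch below maps a breathing signal to a hover height. It is not the Wisp implementation. It assumes the wearable sensor yields a value normalized between 0 (fully exhaled) and 1 (fully inhaled), and the function name `breath_to_height`, the height range, and the smoothing factor are hypothetical.

```python
def breath_to_height(breath, prev_height, low=1.0, high=1.4, smoothing=0.2):
    """Map a normalized breath value to a smoothed hover height in metres.

    `breath` is assumed to lie between 0.0 (exhaled) and 1.0 (inhaled);
    values outside that range are clamped. `smoothing` is the fraction of
    the remaining distance covered per update (exponential smoothing).
    """
    # Clamp noisy sensor readings into the expected range.
    breath = min(1.0, max(0.0, breath))
    # Linear mapping: exhale -> low, inhale -> high.
    target = low + breath * (high - low)
    # Move only part of the way toward the target to avoid jerky motion.
    return prev_height + smoothing * (target - prev_height)
```

With these assumed defaults, the drone would rise on the inhale and sink on the exhale while lagging slightly behind the raw signal, trading responsiveness for smoothness.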

In a truly interdisciplinary project, the Chalmers team has been performing engineering and design experiments to build a stable, enjoyable drone product, as well as participating in training and experiences to better understand the practices and philosophies of meditation and breathwork.

The group uses motion capture to both control and analyze motions of the drone, as well as to capture human movement.

Using real-time and recording functions in tandem, the motion capture data becomes two things at once: a material used to build an interactive experience, and a source of information the group analyzes for its research on how these interactions happen and how we might design better ones in the future.

They also experiment with different positioning systems and AI-based algorithms, with the eventual aim of turning these prototypes into products that all of us can enjoy in our homes.

The Qualisys system gives us unmatched precision and versatility for developing indoor drone applications that we may find soon enough in our homes. We get excellent real-time performance for control algorithms, and extremely rich datasets for our human-centered research, at the same time.

Mehmet Aydın Baytaş

Human-Drone Interaction at Chalmers University with Qualisys

We want to thank the team at the Chalmers University Computer Science Department and the T2i Lab for sharing their work, and for using their Qualisys system to its full potential!

Mehmet Aydın Baytaş and Mafalda Gamboa’s research is supported by the Wallenberg AI, Autonomous Systems and Software Program – Humanities and Society (WASP-HS), as part of the project “The Rise of Social Drones: A Constructive Design Research Agenda” led by principal investigators Morten Fjeld and Sara Ljungblad.

If you are interested in having your lab featured as a Qualisys customer story, please submit a write-up using the form below to share your story with us.